It has been a long time since I last wrote something here. During these months two new things have happened that are worth mentioning. First of all, I’m really close to graduation!

Well, actually I still need to pass my last exam and complete an internship of at least four months. Nothing is certain yet, but I’m in close contact with a game development company… 😉

The second thing is closely related to this post: a couple of months ago, following M3xican’s advice, I began converting my OpenGL demos to object-oriented C++.

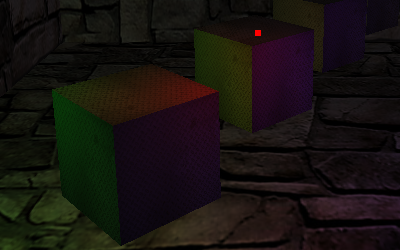

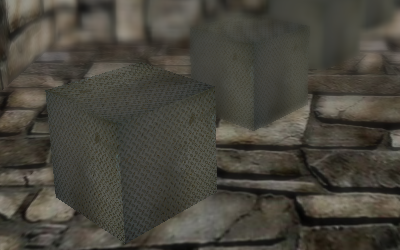

What I have now is really not much. Nevertheless, my class library can load a Stanford PLY model, it is ES 2.0 compliant (which means it should be easy to convert to “pure” OpenGL 3.x), and it can already display both parallax mapping and depth of field!

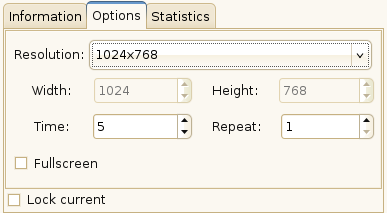

I’m not going to publish any screenshots for now because I think it’s not the time yet. What I’m showing you instead is a simple script, my first one, which I wrote last night using the Blender Python API.

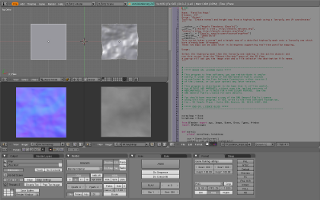

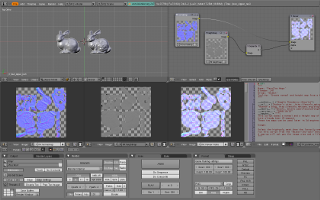

What it does is really simple yet time-saving: you select a high-poly and a low-poly model, run the script from the Object->Scripts menu, and watch Blender bake your normal and height maps and then save them.

I have also set up a simple compositing node configuration to mix the two images into a single parallax map, with the height data encoded in the alpha channel.
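To give a rough idea of what that mixing step amounts to, here is a minimal plain-Python sketch. It is not the script itself and it does not use the Blender API or the compositing nodes. The function name `pack_parallax_map` and the pixel layout (lists of 8-bit tuples and values) are assumptions made only for illustration.

```python
def pack_parallax_map(normal_pixels, height_pixels):
    """Combine RGB normal-map pixels and grayscale heights into RGBA pixels.

    normal_pixels: list of (r, g, b) tuples, one per pixel (assumed layout).
    height_pixels: list of grayscale height values, one per pixel.
    The height ends up in the alpha channel of the result.
    """
    if len(normal_pixels) != len(height_pixels):
        raise ValueError("normal and height maps must have the same number of pixels")

    packed = []
    for (r, g, b), height in zip(normal_pixels, height_pixels):
        # RGB keeps the encoded normal, alpha carries the height.
        packed.append((r, g, b, height))
    return packed


# Two hypothetical pixels: a flat normal at height 0 and a tilted one at height 200.
normal_map = [(128, 128, 255), (140, 120, 250)]
height_map = [0, 200]
parallax_map = pack_parallax_map(normal_map, height_map)
print(parallax_map)
```

A shader can then read the normal from the RGB channels and the height from alpha with a single texture fetch, which is the point of packing both into one image.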

You can download the script from here.

Everything is very simple (and fun!) with the astonishing power of Blender! 🙂