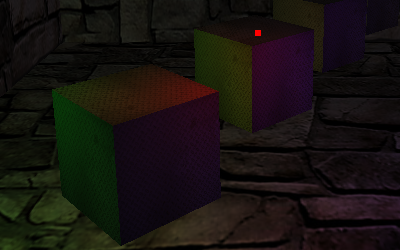

I started exploring deferred shading as a way to render multiple light sources and ended up writing a demo featuring eight different lighting techniques, plus a PyOpenGL class library. 🙂

The whole story is more than a month old. Just after releasing the first depth of field demo I began studying deferred shading, but I widened my scope to include other lighting methods: single and multi-pass fixed-pipeline lighting, per-vertex and per-pixel single and multi-pass shader lighting and, of course, deferred lighting.

While writing the C code, I thought it would be fun to port it to Python as well. That way I could also have a look at the “new” (ArchLinux adopted it quite late 🙂 ) ctypes-based PyOpenGL, aka PyOpenGL 3.

Unfortunately, many little but annoying issues delayed me until today:

- forgetting to set glDepthFunc(GL_LEQUAL) explicitly (or, alternatively, to clear the depth buffer before each pass) in multi-pass scene rendering, which caused every pass except the first one to be discarded.

- trying to make a buggy Python glDrawBuffers() wrapper work. Actually I had no luck with this and gave up on MRT support in PyOpenGL.

- trying to figure out why VBOs didn’t work in PyOpenGL. I gave up on this too. 🙂

- using a uniform variable to index the gl_LightSource structure array, which prevented the shader from running on Shader Model 3.0 cards.

- exploring every possible cause of the “the brick room is very dark in fixed-pipeline mode” issue, only to discover today that it was merely a scaled normals problem. It was easily solved by enabling GL_RESCALE_NORMAL.
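
To see why the depth function matters in the first item of the list, here is a toy model of the depth test at a single pixel. It is plain Python rather than real OpenGL code: `render_passes` and the `DEPTH_FUNCS` table are made-up names for illustration, and the sketch assumes every lighting pass redraws the same geometry, so each pass produces a fragment at exactly the same depth.

```python
import operator

# Hypothetical stand-ins for the OpenGL depth comparison modes.
DEPTH_FUNCS = {
    "GL_LESS": operator.lt,     # OpenGL's default depth function
    "GL_LEQUAL": operator.le,   # also accepts fragments at equal depth
}

def render_passes(func_name, fragment_depth, num_passes, clear_each_pass=False):
    """Return how many passes survive the depth test at one pixel."""
    depth_test = DEPTH_FUNCS[func_name]
    depth_buffer = 1.0                      # default depth clear value
    accepted = 0
    for _ in range(num_passes):
        if clear_each_pass:
            depth_buffer = 1.0              # like clearing the depth buffer
        if depth_test(fragment_depth, depth_buffer):
            depth_buffer = fragment_depth   # depth write
            accepted += 1                   # this pass contributes its light
    return accepted

print(render_passes("GL_LESS", 0.5, 8))                        # 1
print(render_passes("GL_LEQUAL", 0.5, 8))                      # 8
print(render_passes("GL_LESS", 0.5, 8, clear_each_pass=True))  # 8
```

With the default comparison, the first pass writes its depth and every later pass fails the test because its depth is equal, not smaller. Switching to a less-or-equal test or clearing the depth buffer between passes lets all eight passes through.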
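
The dark brick room from the last item of the list can be explained with a few lines of arithmetic. The sketch below is plain Python, not OpenGL, and assumes a pure uniform scale in the modelview matrix; the scale factor of 4 and the helper names are made up for illustration. The fixed pipeline transforms normals by the inverse transpose of the modelview matrix, so a uniform scale by `s` shrinks them to length `1/s`, and the diffuse term shrinks with them.

```python
def transform_normal(normal, scale):
    """Inverse-transpose of a pure uniform scale s is (1/s) * identity."""
    return tuple(c / scale for c in normal)

def rescale_normal(normal, scale):
    """Conceptually what rescaling does: undo the uniform scale factor."""
    return tuple(c * scale for c in normal)

def diffuse(normal, light_dir):
    """Lambertian term max(N . L, 0), without renormalizing N."""
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))

scale = 4.0                      # assumed uniform scale of the room
normal = (0.0, 0.0, 1.0)         # unit normal facing the light
light_dir = (0.0, 0.0, 1.0)      # unit vector towards the light

eye_normal = transform_normal(normal, scale)
print(diffuse(eye_normal, light_dir))                         # 0.25
print(diffuse(rescale_normal(eye_normal, scale), light_dir))  # 1.0
```

Under these assumptions a surface facing the light head-on receives only a quarter of the expected diffuse intensity, which matches a room that looks far too dark; restoring unit length brings it back to full brightness.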

At last I made it: I have a multi-light demo that includes deferred lighting (although very rough and not optimized at all) and shows consistent lighting in all rendering modes.

The PyOpenGL class library almost works: there are no MRTs or VBOs, but it is functional enough to support complete conversions of the DoF2 and multilight demos (the latter without deferred mode, which of course relies on MRTs).

It’s no longer news that you can watch it in action on my YouTube Channel, or in a high-definition 720p version hosted on my Vimeo page.

All’s well that ends well. 🙂