Yeah, I came quite close to postponing this post until tomorrow, but I won’t. 🙂

The title is humorous but clear: my latest demo deals with deferred shading again.

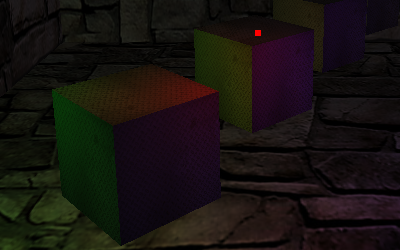

This time I made every effort to do things right: there is support for directional, point and spot lights and, much more important for performance, lights are rendered only inside their influence volumes using projective texturing.
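As a rough illustration of the projective lookup, here is a minimal Python sketch of the math a shader would perform to find which G-buffer texel lies under a fragment of the light volume. The function name `clip_to_gbuffer_uv` is hypothetical, and the demo's shaders may well fold the scale and bias into a matrix instead.

```python
def clip_to_gbuffer_uv(clip):
    """Map a clip-space position (x, y, z, w) to G-buffer texture coordinates.

    Hypothetical helper: it mirrors what a projective texture fetch does
    for a fragment of a light volume.
    """
    x, y, _z, w = clip
    if w <= 0.0:
        raise ValueError("position is not in front of the camera")
    # The perspective divide gives normalized device coordinates in [-1, 1].
    ndc_x, ndc_y = x / w, y / w
    # Scale and bias remap them to [0, 1] texture space.
    return (ndc_x * 0.5 + 0.5, ndc_y * 0.5 + 0.5)


# The center of the screen maps to the center of the G-buffer.
print(clip_to_gbuffer_uv((0.0, 0.0, 0.5, 1.0)))
```

Because the coordinates come from the fragment's own projected position, the same code works for a full-screen quad and for any bounding shape.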

This means that while directional lights affect everything and have to be rendered as full-screen quads, point lights are rendered as cubes and spot lights as pyramids.
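To make the shapes concrete, here is a small sketch of how such volumes could be built. It is not the demo's code: the function names are hypothetical, and I am assuming a spot light described by a range and a half angle, placed in its own space with the apex at the origin and looking down -Z.

```python
import math


def point_light_cube(center, radius):
    """Return the 8 corners of an axis-aligned cube enclosing the light's sphere."""
    cx, cy, cz = center
    return [(cx + sx * radius, cy + sy * radius, cz + sz * radius)
            for sx in (-1, 1) for sy in (-1, 1) for sz in (-1, 1)]


def spot_light_pyramid(light_range, half_angle):
    """Return the apex and the 4 base corners of a pyramid enclosing the cone.

    The square base sits at distance light_range from the apex; its half size
    is range * tan(half_angle), so it circumscribes the cone's circular base.
    """
    h = light_range * math.tan(half_angle)
    z = -light_range
    return [(0.0, 0.0, 0.0),
            (-h, -h, z), (h, -h, z), (h, h, z), (-h, h, z)]


cube = point_light_cube((0.0, 0.0, 0.0), 2.0)
pyramid = spot_light_pyramid(10.0, math.radians(30.0))
print(len(cube), len(pyramid))
```

Eight and five vertices respectively, compared with the dozens a tessellated sphere or cone would typically need.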

This is slightly different from the usual approach, which uses spheres and cones and is therefore a bit more vertex heavy. 🙂

Rendering these bounding shapes instead should barely affect performance, but it makes the check for the camera position a bit different.

The check is disabled by default, as it is sufficient to cull front faces and invert the depth test to avoid the double lighting issue and benefit from some depth optimization.
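The idea behind that default can be simulated per pixel. In this sketch (hypothetical function name, not the demo's code) depths are linear distances normalized so that the far plane sits at 1.0, only the back face of the convex volume is rasterized, and the depth comparison is inverted, as with a greater-or-equal depth function.

```python
def light_fragment_shaded(back_face_depth, scene_depth, far_plane=1.0):
    """Decide whether a light volume fragment survives the inverted depth test.

    Only back faces are drawn (front faces are culled), so each pixel is
    covered by the convex volume at most once, even with the camera inside it.
    """
    if back_face_depth > far_plane:
        # The back face is clipped by the far plane: no fragment is generated.
        return False
    # Inverted test: keep the fragment when the scene is not behind the volume.
    return back_face_depth >= scene_depth


# Scene surface in front of the back face: the pixel gets lit.
print(light_fragment_shaded(0.6, 0.5))
# Scene surface behind the whole volume: rejected by the depth test.
print(light_fragment_shaded(0.6, 0.8))
# Back face beyond the far plane: nothing is drawn, so the light is lost.
print(light_fragment_shaded(1.2, 0.9))
```

The first two cases show the depth optimization; the last one is the far plane situation in which this shortcut breaks down.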

Enabling the check exposes my poor approximation: it tests whether the camera is inside a pyramid using a formula meant for a cone, so it is better left disabled. As long as the volume does not intersect the far plane, everything should behave as expected… 😀
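To show why a cone formula is only an approximation here, this sketch compares the two containment tests. Both functions are hypothetical and are not the formula used in the demo; they assume the point is given in light space, with the apex at the origin, the axis along -Z and a flat base at distance `light_range`.

```python
import math


def inside_cone(p, light_range, half_angle):
    """True if p lies inside the cone of the given range and half angle."""
    x, y, z = p
    depth = -z
    if depth < 0.0 or depth > light_range:
        return False
    # Circular cross-section: compare the radial distance with the radius.
    return math.hypot(x, y) <= depth * math.tan(half_angle)


def inside_pyramid(p, light_range, half_angle):
    """True if p lies inside the square pyramid that circumscribes the cone."""
    x, y, z = p
    depth = -z
    if depth < 0.0 or depth > light_range:
        return False
    # Square cross-section: compare each axis with the half size.
    half = depth * math.tan(half_angle)
    return abs(x) <= half and abs(y) <= half


on_axis = (0.0, 0.0, -5.0)
near_edge = (4.5, 4.5, -5.0)
angle = math.radians(45.0)
print(inside_cone(on_axis, 10.0, angle), inside_pyramid(on_axis, 10.0, angle))      # True True
print(inside_cone(near_edge, 10.0, angle), inside_pyramid(near_edge, 10.0, angle))  # False True
```

A camera sitting near one of the pyramid's edges is inside the rendered volume, yet the cone test reports it as outside, which is the kind of mismatch the approximation produces.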

The demo is also a bigger attempt at integrating effects; it will serve as a test bed for a more organized, higher-level framework.

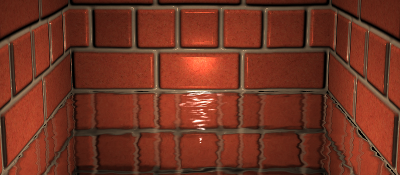

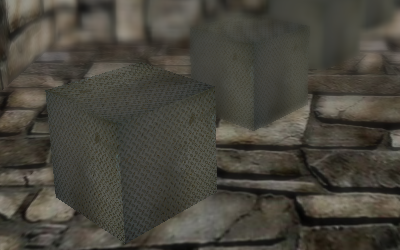

As a matter of fact it also features Screen Space Ambient Occlusion, Iterative Parallax Mapping and Depth of Field.

Very cool stuff, but I already have a list of things that I would like to implement sooner or later, like a Light Pre-Pass, a couple of advanced shadow mapping techniques, the integration of water rendering, HDR illumination and the reconstruction of position from depth.

I have published the sources and a video (Vimeo | YouTube) which, for the first time, comes with a nice soundtrack: it’s Nova Siberia by Big Giant Circles (Jimmy Hinson) from OverClocked ReMix! 😉

Note: I have had a hard time with glc, x264, mencoder and ffmpeg, and YouTube still doesn’t accept my video together with the sound, so for the moment I have uploaded a muted version.