If you have read my LinkedIn profile lately, you already know that a few months have passed since I started my pre-graduation internship at Raylight (and since I signed my first NDA 😉 ).

The real-time graphics R&D work that I’m doing there for my thesis is enjoyable and stimulating, but that is not the topic of this post…

A few days ago Alessandro, a 3D artist on the team, asked me for a script that would let him use Blender for one more task in the company's asset creation pipeline: weight painting.

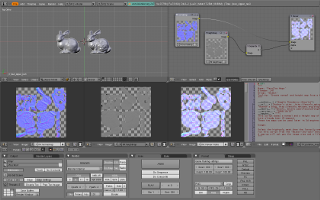

He needed a simple script to convert vertex weights into per-bone vertex color layers, so that he could bake them to per-bone UV maps and later import them into 3D Studio Max.

At first I didn’t even know where to start, or how to extract per-vertex weight data and match it with per-face vertex color data, but my second attempt went as smoothly as honey.

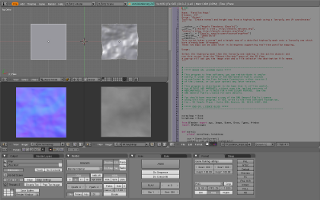

The core algorithm is, indeed, very simple:

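    for f in faces:
        # each face stores one color per corner, so walk its vertices by position
        for i, v in enumerate(f):
            # (vertex group name, weight) pairs influencing this vertex
            infs = me.getVertexInfluences(v.index)
            for vgroup, w in infs:
                # write into the color layer named after the vertex group
                me.activeColorLayer = vgroup
                col = f.col[i]
                # weight 0.0..1.0 becomes a gray level 0..255
                col.r = col.g = col.b = int(255 * w)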
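To try the same idea outside Blender, here is a minimal sketch in plain Python. It is not the script itself: the function name `weights_to_color_layers`, the `faces` list of vertex-index tuples and the `influences` dictionary are all made up for illustration, and I am assuming that corners without any influence simply stay black.

```python
def weights_to_color_layers(faces, influences):
    """Build one gray-scale color layer per vertex group (hypothetical helper).

    faces      -- list of tuples of vertex indices, one tuple per face
    influences -- dict: vertex index -> list of (group name, weight 0.0..1.0)
    Returns a dict: group name -> per-face lists of (r, g, b) corner colors.
    """
    # Collect every group name that appears in the influence data.
    groups = sorted({g for infs in influences.values() for g, _ in infs})

    # Start each layer fully black (assumption: no influence means weight 0).
    layers = {g: [[(0, 0, 0)] * len(face) for face in faces] for g in groups}

    for fi, face in enumerate(faces):
        for ci, vi in enumerate(face):
            # Colors live on face corners, weights live on vertices:
            # the corner position ci is what links the two.
            for group, w in influences.get(vi, []):
                gray = int(255 * w)
                layers[group][fi][ci] = (gray, gray, gray)
    return layers


# Two triangles sharing an edge, with two made-up bone groups.
faces = [(0, 1, 2), (2, 1, 3)]
influences = {
    0: [("arm", 1.0)],
    1: [("arm", 0.5), ("leg", 0.5)],
    2: [("leg", 1.0)],
    3: [],
}
layers = weights_to_color_layers(faces, influences)
```

The one thing worth noticing is that a vertex shared by several faces gets its color written once per face corner, which is exactly why the loop goes over faces first and vertices second.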

Well, he has not taken advantage of it yet, and I don't know whether he ever will; nevertheless the script works and I have shared it on my site, as usual. 😉